In SQL Server there is a feature called the Deadlock Monitor Thread, which runs in the background to identify and “help” resolve deadlocks in the instance, thus preventing sessions from waiting for each other endlessly. If you query the sys.dm_os_waiting_tasks DMV, you will notice that there is always a system task with the REQUEST_FOR_DEADLOCK_SEARCH wait type. This thread fires every 5 seconds to check for deadlocks on the instance. If it finds a deadlock, it chooses one of the deadlocked sessions as the victim and rolls back its transaction (the victim receives error 1205), which frees the locked resources for the other waiting session.

How does SQL Server decide which session it will drop? It is very simple: as long as the sessions have the same priority (SET DEADLOCK_PRIORITY), it will always eliminate the session that has the lowest cost (generally, the one that was “locked” last), which makes it cheaper to roll back the transactions performed by the session chosen as the deadlock victim. If the sessions have the same priority and the same cost, the victim of the deadlock will be chosen at random.

When the Deadlock Monitor Thread drops a session due to a deadlock, it runs again immediately to verify that the deadlock has been resolved. If there are still deadlocks in the instance, it will eliminate more sessions and shorten the interval between the next checks, down to as little as 100 ms, until no deadlock is detected.

Step 5 - I will try to lock the table (it is already locked by the other session)
Step 6 - When trying to lock Table 1, the deadlock will occur

IF (OBJECT_ID('dbo.Historico_Deadlocks') IS NULL)
INT = DATEDIFF(HOUR, GETUTCDATE(), GETDATE())
DATETIME2 = ISNULL((SELECT MAX(Dt_Log) FROM dbo.Historico_Deadlocks WITH(NOLOCK)),
DATEADD(HOUR, CAST(timestamp_utc AS DATETIME2)) AS Dt_Evento,
IF (OBJECT_ID('tempdb.#xml_deadlock') IS NOT NULL) DROP TABLE #xml_deadlock
DATEADD(HOUR, 'datetime2')) AS ,
sys.fn_xe_file_target_read_file(N'C:\Logs\Deadlocks*.xel', NULL, NULL, NULL)
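The victim selection rule described above can be sketched in a few lines of Python. This is only an illustrative model, not SQL Server's actual implementation: the `Session` class, the numeric `cost` field, and the `find_cycle` and `choose_victim` helpers are hypothetical names introduced here, and the sketch assumes that the session with the lower priority value is sacrificed first, as with SET DEADLOCK_PRIORITY.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    session_id: int
    priority: int  # assumption: lower value is sacrificed first
    cost: int      # illustrative rollback cost, e.g. amount of log written


def find_cycle(waits_for):
    """Return one cycle in a wait-for graph {waiter: blocker}, or None."""
    for start in waits_for:
        path = []
        node = start
        # Follow the chain of blockers until it ends or repeats a node.
        while node in waits_for and node not in path:
            path.append(node)
            node = waits_for[node]
        if node in path:
            return path[path.index(node):]
    return None


def choose_victim(sessions, rng=random):
    """Lowest priority first, then lowest cost, then a random pick among ties."""
    lowest = min((s.priority, s.cost) for s in sessions)
    candidates = [s for s in sessions if (s.priority, s.cost) == lowest]
    return rng.choice(candidates)


# Two sessions blocking each other, same priority, different cost.
sessions = {
    51: Session(51, priority=0, cost=400),
    52: Session(52, priority=0, cost=120),
}
waits_for = {51: 52, 52: 51}

cycle = find_cycle(waits_for)
victim = choose_victim([sessions[sid] for sid in cycle])
print(victim.session_id)  # 52, the cheaper session to roll back
```

Raising the priority of session 52 above that of session 51 would flip the outcome, since priority is compared before cost in this model.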
| id | parent_name | child_id | child_name |

| object_name | lock_type | lock_mode | lock_data | lock_status |
|---|---|---|---|---|
| parent | RECORD | S | supremum pseudo-record | GRANTED |

We want to read the records that are not touched by the second session.

What does a gap lock mean? A gap lock is a lock on a gap between index records, or a lock on the gap before the first or after the last index record. When we run the same query twice, we get the same result, regardless of modifications made to that table by other sessions. This makes reads consistent and therefore makes the replication between servers consistent.

If we look at the trigger in the where section

| object_name | lock_type | lock_mode | lock_data | lock_status |
|---|---|---|---|---|
| parent | RECORD | X,REC_NOT_GAP | 1, 1 | GRANTED |
| parent | RECORD | S,REC_NOT_GAP | 3 | GRANTED |
| parent | RECORD | S,REC_NOT_GAP | 2 | GRANTED |

Now we see that this statement does not lead to a deadlock, but the second session will eventually time out because session one has neither committed nor rolled back.
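To make the gap lock definition above more concrete, here is a small Python model of the gaps around a set of index records. It is a deliberately simplified sketch, not how InnoDB stores or checks locks: the key values, the `gaps`, `gap_for_insert` and `insert_blocked` helpers, and the use of infinity to stand in for the supremum pseudo-record are all assumptions made for illustration.

```python
import bisect

SUPREMUM = float("inf")    # stands in for the supremum pseudo-record
INFIMUM = float("-inf")    # lower bound before the first index record


def gaps(index_keys):
    """List the open intervals (gaps) before, between and after the keys."""
    bounds = [INFIMUM] + sorted(index_keys) + [SUPREMUM]
    return list(zip(bounds, bounds[1:]))


def gap_for_insert(index_keys, new_key):
    """Return the gap a new key would land in, or None if the key exists."""
    keys = sorted(index_keys)
    pos = bisect.bisect_left(keys, new_key)
    if pos < len(keys) and keys[pos] == new_key:
        return None
    lower = keys[pos - 1] if pos > 0 else INFIMUM
    upper = keys[pos] if pos < len(keys) else SUPREMUM
    return (lower, upper)


def insert_blocked(index_keys, locked_gaps, new_key):
    """In this model an insert waits if its target gap carries a gap lock."""
    return gap_for_insert(index_keys, new_key) in locked_gaps


keys = [10, 20, 30]                 # hypothetical index records
locked = {(30, SUPREMUM)}           # gap after the last record is locked

print(gaps(keys))
print(insert_blocked(keys, locked, 35))  # True: lands in the locked gap
print(insert_blocked(keys, locked, 15))  # False: the gap (10, 20) is free
```

Because inserts into a locked gap have to wait, a range read repeated inside the same transaction cannot see new rows appear in that range, which is the consistency property the paragraph describes.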

mysql> select * from parent where id1 for share

Session one locks two rows in the parent table, one of which is a gap lock.

| object_name | lock_type | lock_mode | lock_data | lock_status |
|---|---|---|---|---|
| parent | RECORD | X,REC_NOT_GAP | 1 | WAITING |
| child | RECORD | X,REC_NOT_GAP | 1 | GRANTED |
| parent | RECORD | X,REC_NOT_GAP | 1 | GRANTED |
| parent | RECORD | X,GAP | 2, 2 | GRANTED |

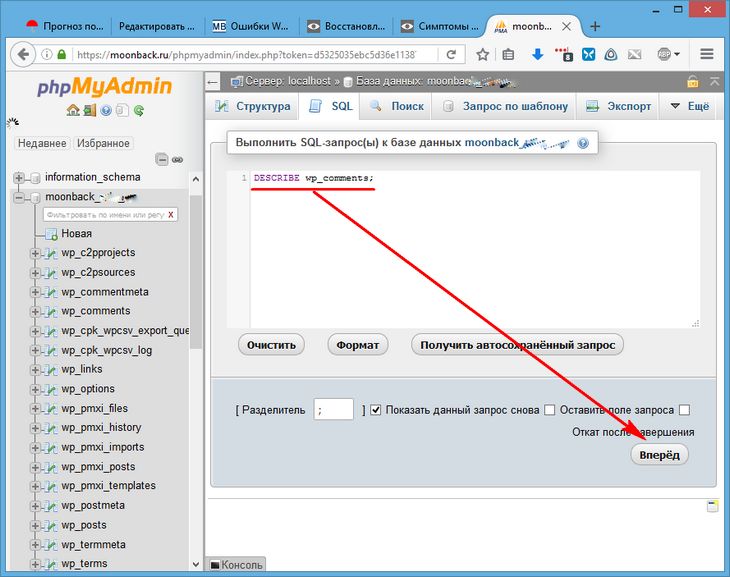

Who uses triggers you may wonder? That’s a valid question. But if you plan to do a database migration you may find them handy. Let’s say that as part of the migration you create a new, separate schema which you plan to use for the new version. Or you could break one table into multiple tables, in order to separate domain concepts. One case for this would be refactoring your monolith into microservices, with the database migration as part of that work.

So, we’re not going with a big bang approach but with incremental changes. We want to keep the monolith working but at the same time prepare the new services so that we can do a canary release at some point. Of course, in order to do this we need to synchronise the data. And at the same time we don’t want to add more code to the monolith just to support this migration, so the obvious choice would be to use database triggers.

So now that we have found a valid case for using triggers, let’s see what we need to be careful about when using them. Please check this article in order to prepare your setup. We’re going to add a column to the parent table.

mysql> update parent set parent_name='parent2' where id=1

mysql> SELECT object_name, lock_type, lock_mode, lock_data, lock_status FROM performance_schema.data_locks
